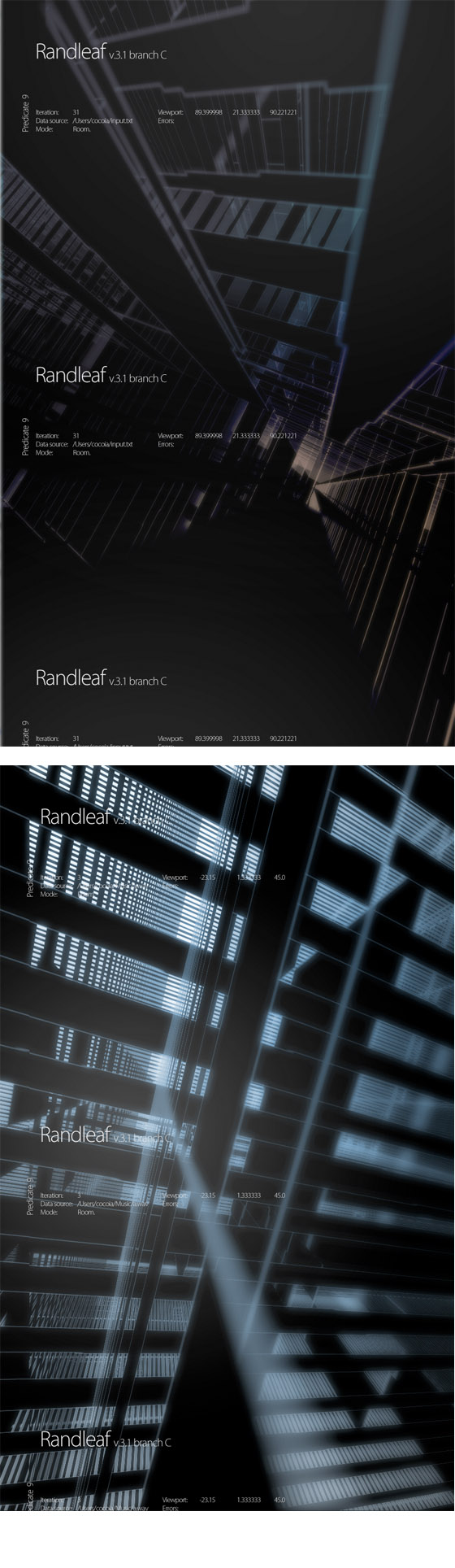

Okay, I’ve finally settled on a name. Randleaf is a modular 3d and 2d compositing graphic generator, for now quite slow. With the additions I made to the code today (a gradient background and some other visualization tweaks), I am proud to say Randleaf has entered a whole new phase with its new graphics modes.

These two samples show a new, dynamic PNG overlay (great for tracing back what I did) and gradient background support, which, in my opinion, adds a lot to the whole effect. Of course, I picked some good samples out of the 80 or so renders, but they do show what direction I am going in.

I’ve had a lot of verbal reactions and discussions in the last few days over my novel ‘application’ that generates three-dimensional abstract imagery. The most prominent design feature I wanted to see was the generation of something I once had a ‘monopoly’ on in terms of tutorials: the so-called ‘tech circles’.

You can’t escape them in abstract art. Seemingly randomly generated, these circles feature all sorts of strange cutouts and details, sometimes text or small illustrations, and always layered. My whole adventure into OpenGL began a month ago, when I saw a movie: Ghost in the Shell 2. It had this completely cool way to visualize the running state of a computer (more specifically, a firewall). I won’t go into detail as to how it looks; I’d prefer you look at a screenshot (it’s actually FAR better in the movie, do check it out, after the ‘Dollhouse’ scene).

As you can see, it’s quite aesthetically pleasing. Stop to consider how this would look if it were to visualize, say, network activity, or my idea, firewall logs. This would be almost like iPulse in 3d (and ohh, do I love iPulse!). So, just blurting out in all honesty, I thought that was so horny, I just had to learn OpenGL and do something like this. I immediately ran into the obvious ‘it’s harder than it looks’, then entered the ‘ah, do I really have to do this with OpenGL?’ phase, and eventually settled on just picking up a good book. I made a horrible first mockup with a kqueue for checking the logs and a custom view. It wasn’t that great. So, I abandoned it for a while, working on my prime projects (Praetorian, iSight Expert) and, well, messed with OpenGL in my spare time. I looked into Python applications and the Cocoa / Quartz side of things, and got more interested. I am now considering picking up a book on Quartz programming.

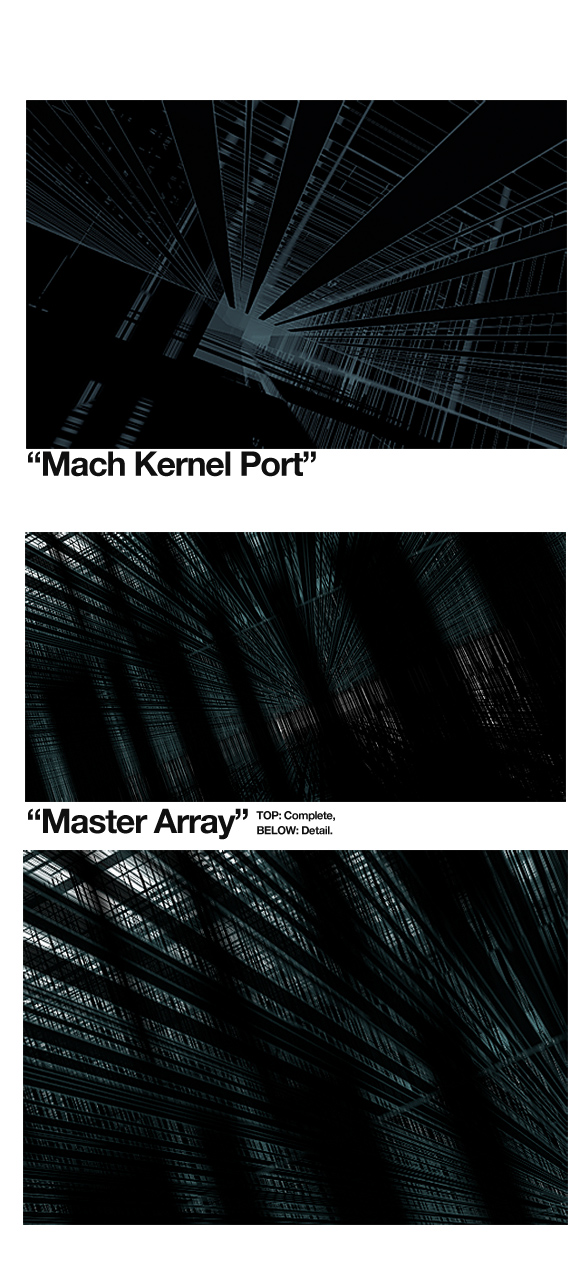

Back on topic: it was creating an array of objects and randomly assigning attribute values that brought me to these landscapes. After a while, you feel like testing your hardware and you just expand the array to see how far you can go. Fun ensued.

Now, having made my first really worthy GL-powered app, I want to expand on its functionality. I am doing experimental hi-res (2000 px high and above) rendering and trying to make the whole thing a bit more modular, so that things like an external rendering application become possible, and I want its product to be a more complete graphic. I could change some objects at random into letters and “+” marks, but that doesn’t really cut it for me (besides, they get swamped by the countless other objects or gruesomely distorted, which I can’t help but refuse to accept). In any case, I’ve tried to get more control over my output, and it’s getting there… I now have the ability to magically dissolve a ‘room’.

The text and the few ‘2d’ additions, as you can see, have been added with very sophisticated scripts. No, actually, it’s just a PNG with some lines and dots on it, with the text being changed per rendered frame. It would be quite trivial to build a library of 2d graphics that this program can apply on demand, but I am really looking for something that will generate some 2d for me, like those tech circles. I hope I’ll come up with a solution for that in the next month. Any thoughts are welcome, as usual.

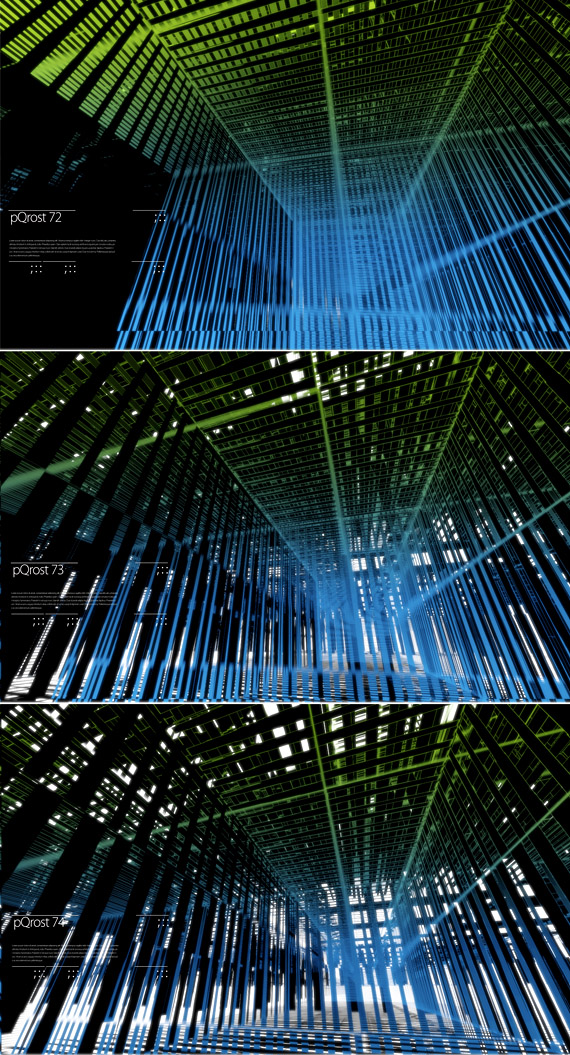

Wohoo, even more purdy images. I just had to show this one. It’s purrrrdy.

Dear iSight Expert and Praetorian beta testers, there are some good things in the pipeline! I think I’ll do some updates tomorrow or in the next few days, and perhaps publish a definitive release roadmap.

My humble apologies for the extremely salt-less post I did yesterday; I really didn’t want to offend anyone with taste. It’s there to stay. No more words on that.

Here is some homework I literally ‘let do’ in my sleep. Like I said to a classmate over IM: “it’s like a tiny Chinese fellow in a black box with Maya 6 and a keyboard shortcut for matrix extrude on fast-forward”. I’m taking suggestions to name this thing; I can’t seem to come up with anything better than the ‘Purdy-Image-O-Matic™’.

Without further ado, the poster;

It’s great to get results like these with as little knowledge of these almighty tools as we, the young coders of the ‘lazy’ generation, have. At least, I am just beginning. I have never used an Apple II. I didn’t write assembly on my 286. I did a lot of work with old stuff to compensate, longing to have been born in that age. It didn’t work out that way, so these days I am just busy learning everything I think is great for expressing myself or making stuff work the way I want it to work.

Oh, before I forget, that’s a font I had to make for modular typography class. “Acreola”. I’m so carried away in this whole rant that I forgot all about that. What should I do with it? Burn it? Eat it? Give it with every odd copy of my software?

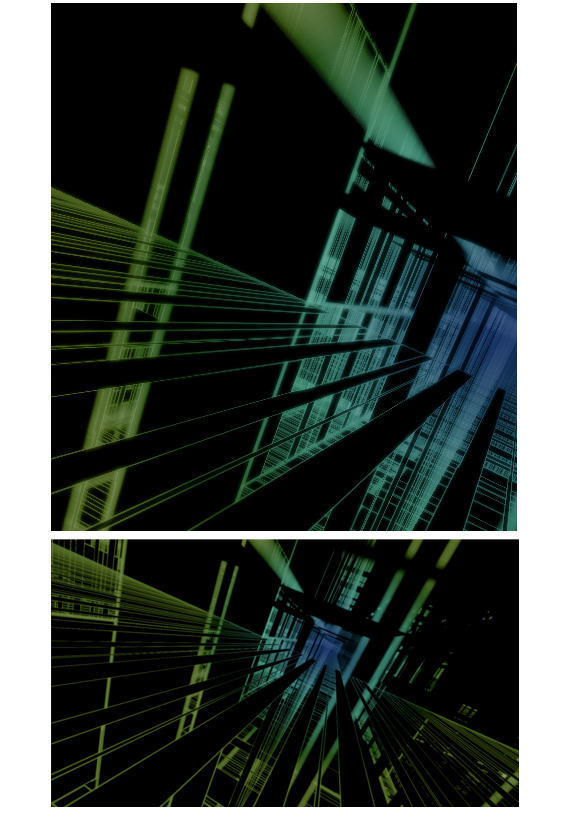

Building further on my experiments with OpenGL.

As you can see, some color modulation and a post-process blur with additive blending does give it an edge. It’s nice, soft, and scenic. It can output a cool 40 images per minute in its current, raw form. I may push it out once I get things like ‘true’ 3d scenics with shading working.
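For readers wondering what a post-process blur with additive blending amounts to, here is a minimal sketch in plain Python. It is not the actual OpenGL code behind these renders: the box blur, the grayscale list-of-lists image, and the names `box_blur`, `additive_glow`, `radius` and `strength` are all assumptions made for illustration.

```python
def box_blur(img, radius=1):
    """Average each pixel with its neighbours inside a square window.

    `img` is a list of rows of floats in [0, 1] (a hypothetical grayscale
    buffer standing in for a rendered frame).
    """
    h, w = len(img), len(img[0])
    out = [[0.0] * w for _ in range(h)]
    for y in range(h):
        for x in range(w):
            total, n = 0.0, 0
            # Clamp the window at the image borders.
            for yy in range(max(0, y - radius), min(h, y + radius + 1)):
                for xx in range(max(0, x - radius), min(w, x + radius + 1)):
                    total += img[yy][xx]
                    n += 1
            out[y][x] = total / n
    return out


def additive_glow(img, radius=1, strength=0.5):
    """Add a blurred copy back onto the original and clamp to 1.0."""
    blurred = box_blur(img, radius)
    return [
        [min(1.0, p + strength * b) for p, b in zip(row, blurred_row)]
        for row, blurred_row in zip(img, blurred)
    ]
```

Because the blurred copy is added rather than mixed in, bright areas bleed softly into their surroundings while nothing ever gets darker, which is roughly where the soft, scenic look comes from.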

Because xyz (Nate) asked for some details on this, I’ll happily disclose some. My ‘script’ (it used Python first, now most of it is just bare C++ or Objective-C) receives random input from any source (say, you could pipe your chat log into it, or the contents of your favorite MP3) and processes it into various arrays of data. It then randomly selects values to assign to the properties of a hard-coded array of 3d objects, e.g. cubes, planes, and lines, and their X, Y, Z positions and distortions. Most data, not being really random, creates interesting patterns from strange perspectives. It uses basic lighting for every object (simplified), depth testing for overlapping shapes, and depth-of-field (limited and simple). Overall, it looks landscape-like, or like it’s some sort of room or space. I think most of the output images are pretty much industry-grade; I made a mock-up of one of the generated images as a book cover.
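To make that a bit more concrete, here is a rough Python sketch of the data-to-scene step described above. It is an illustration under assumptions, not the real script: the `SceneObject` fields, the `build_scene` and `bytes_to_values` helpers, the seed, and the mapping of bytes to the range [0, 1] are all hypothetical.

```python
import random
from dataclasses import dataclass

# Hard-coded kinds of objects, as in the description above.
SHAPES = ("cube", "plane", "line")


@dataclass
class SceneObject:
    shape: str
    x: float
    y: float
    z: float
    distortion: float


def bytes_to_values(data: bytes) -> list[float]:
    """Turn raw input (a chat log, an MP3, anything) into floats in [0, 1]."""
    return [b / 255 for b in data]


def build_scene(data: bytes, count: int, seed: int = 0) -> list[SceneObject]:
    """Assign randomly selected input values to each object's properties."""
    values = bytes_to_values(data)
    if not values:
        raise ValueError("need at least one byte of input")
    rng = random.Random(seed)  # seeded so a render can be reproduced

    def pick() -> float:
        # The values come from the input, so its patterns leak into the scene.
        return rng.choice(values)

    scene = []
    for i in range(count):
        scene.append(SceneObject(
            shape=SHAPES[i % len(SHAPES)],
            x=pick() * 2 - 1,  # positions scaled to [-1, 1]
            y=pick() * 2 - 1,
            z=pick() * 2 - 1,
            distortion=pick(),
        ))
    return scene
```

Feeding it something like `build_scene(open(path, "rb").read(), count=500)` would give a list ready to hand to a renderer; input that is not really random (text, audio headers) clusters the values, which is the kind of patterning mentioned above.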

For now, it’s just an experiment. Some other cool graphic stuff from my classmate, Jelmar. He’s working on ‘Sixty Pounder’, a great characteristic ‘fat face’ for expressive messages. As quoted from Jules & co;

Jelmar and I, of course, enjoy feedback like Nate’s. Please, let us know what you want to know, or what you want to see! I’ll upload some wallpaper-sized images (any idea on sizes? I already have 1920×1200 written down). Email me!

Generated with ‘random’ data and the scripts that will soon replace digital abstract artists.

This just rendered for ages. It didn’t have any progress, because, hey, I’m not going to write a specific raytrace renderer in OpenGL, I have no idea how to do that. But this is fun stuff.

Edit: I feel like requests! Send me your ‘idea’ (it can be a few words, like I did here) and I’ll try to generate a random image out of it that is somewhat related. It would be fun to make a weekly out of this.