I have been watching a few TED videos today (in case you’ve been living under a rock, TED is the Technology, Entertainment, Design conference, with some of the brightest and coolest people on this planet attending or ‘lecturing’) which I hadn’t seen before because they didn’t relate directly to me. What struck me most was a combination of two of them. One is Richard Dawkins’ “The Universe is Queerer than we Suppose”, which deals with the universe as we see it, the way we are, and how these things relate to each other (you should just watch it; I’m butchering it by putting it in my own words, and I am a great fan of Dawkins’ writing and speaking). The other is Will Wright’s “Toys that Make Worlds”, a presentation by -the- Will Wright, the guy who made Simcity, the Sims, and now Spore. It is about games, specifically Spore, but it made me think mostly of the games he made previously, Simcity in particular.

What these two presentations made me realize is that interaction with our computers has been incredibly limited. If you take a look at Simcity 2000 and imagine it were actual city planning software, it has the most brilliant interface in the world.

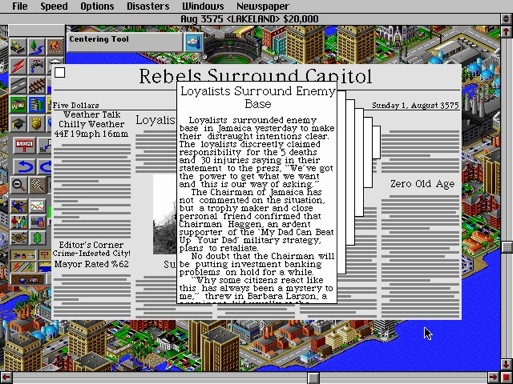

Keep in mind that humans have been engineered by nature over the course of thousands of years to adapt and optimize their senses and perception to the scale and velocity of the human world; not that of bacteria, atoms, or planets, but that of humans. It is therefore logical that there is some distance between the representation of information in our mind and the representation of information in a computer program. The way information in our mind is represented on the computer is by means of a model (in real life, cities are sets of buildings logically laid out; Simcity’s model divides a representation of terrain into the squares of a grid on which to distribute objects such as buildings, roads, and trees). The way information in the computer is presented back to us to absorb is often by means of a metaphor or layout, or a combination of these (the Simcity 2000 ‘alert dialog’ for occurrences and things the player ought to know took the form of an automatically generated newspaper). All that happens between human and computer thanks to these two is interaction; the interaction in Simcity was ‘painting’ objects on the grid and dealing with the consequences.
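To make the model / metaphor / interaction split a bit more tangible, here is a minimal sketch in Python. It is only an illustration of the idea, not how Simcity is actually built: the names `CityGrid`, `paint`, and `headline`, and the two headlines themselves, are all made up for this post. The grid is the model, painting a rectangle of tiles is the interaction, and the newspaper headline is the metaphor.

```python
from dataclasses import dataclass, field


@dataclass
class CityGrid:
    """Model: terrain divided into square tiles, each holding at most one object."""
    width: int
    height: int
    tiles: dict = field(default_factory=dict)  # (x, y) -> kind of object

    def paint(self, kind, x0, y0, x1, y1):
        """Interaction: 'paint' an object over a rectangle of tiles.

        Tiles outside the grid or already occupied are skipped.
        Returns the number of tiles actually filled.
        """
        placed = 0
        for x in range(min(x0, x1), max(x0, x1) + 1):
            for y in range(min(y0, y1), max(y0, y1) + 1):
                in_bounds = 0 <= x < self.width and 0 <= y < self.height
                if in_bounds and (x, y) not in self.tiles:
                    self.tiles[(x, y)] = kind
                    placed += 1
        return placed

    def headline(self):
        """Metaphor: report the state as a newspaper headline, not an alert box."""
        kinds = list(self.tiles.values())
        if "house" in kinds and "road" not in kinds:
            return "RESIDENTS DEMAND ROADS"
        return "ALL QUIET IN THE CITY"


city = CityGrid(width=8, height=8)
city.paint("house", 0, 0, 2, 0)   # drag out a row of three houses
print(city.headline())            # RESIDENTS DEMAND ROADS
city.paint("road", 0, 1, 2, 1)    # paint a road alongside them
print(city.headline())            # ALL QUIET IN THE CITY
```

Note that the player never touches `tiles` directly; everything goes through painting and reading the paper, which is the whole point.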

Models and metaphors help put otherwise hopelessly inhuman, abstract things into a more logical and accessible representation. As you can see, this game was optimized to be as accessible as possible, and what generally happens with software in that situation is that it pulls the model and metaphor even closer to the real world. In Simcity’s case, it’s easy to forget that its only purpose is really just making imaginary cities, not real ones. But one can take another example to show how models and metaphors relate to desktop applications: Apple’s recent ‘user experience breakthrough’ in backup software, Time Machine, is almost ‘game-ey’ in its appearance and interaction.

Time Machine has a real-life equivalent of its purpose: rummaging through old versions of a place. The starting point was clearly ‘browse old objects’, but within our desktop environment, browsing has been conventionalized by our file manager into list views, icon views, and lately a more ‘real’ flow of visual representations (Cover Flow). The model is therefore that an old possession (in your mind) is represented by an older version of a file. The metaphor (presenting information back to you) is traveling through incarnations of the container (directory, application, window) of the files. Here is the golden part: the interaction is basically traveling (traveling and not browsing, as you are following a single dimension; there is but one path, and that’s the one into the past), which comes naturally to us. No matter how the container of your represented possessions changes, Time Machine can work with it; the interaction is always the same. No matter how much the actual objects change, the system’s models and metaphors can accommodate them, just as you would be able to feed the up-to-date situation of a city back into Simcity. This is what should be inherent in high-quality interfaces.

Interfaces with a low degree of interaction, such as Adobe Photoshop’s limited metaphor of ‘painting on a canvas’ (which has become anything but accurately representative of the image editing process since the addition of 120 tools other than the brush and pencil), are becoming more and more dated. Although the trend of ‘delicious generation’ interfaces is less than favorable (sacrificing function for form), I do encourage using the processes of interaction we have seen in some less action-packed games as inspiration for new, more intuitive, and even fun interfaces.
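The ‘traveling, not browsing’ interaction I described for Time Machine can also be sketched in a few lines of Python. This is my own toy illustration and says nothing about how Apple actually implemented it; `Timeline`, `record`, `back`, `forward`, and `restore` are hypothetical names. The point it tries to show is that the interaction only ever moves along one axis, whatever the container happens to hold.

```python
from dataclasses import dataclass, field


@dataclass
class Timeline:
    """Snapshots of one container (say, a folder), ordered oldest to newest."""
    snapshots: list = field(default_factory=list)  # each snapshot: name -> content
    position: int = -1                             # snapshot currently in view

    def record(self, contents):
        """Store a copy of the container as it is now and jump back to 'now'."""
        self.snapshots.append(dict(contents))
        self.position = len(self.snapshots) - 1

    def current(self):
        """The snapshot in view, or an empty container if nothing is recorded."""
        return self.snapshots[self.position] if self.snapshots else {}

    def back(self):
        """Travel one step into the past; stops at the oldest snapshot."""
        if self.position > 0:
            self.position -= 1
        return self.current()

    def forward(self):
        """Travel one step toward the present; stops at the newest snapshot."""
        if self.position < len(self.snapshots) - 1:
            self.position += 1
        return self.current()

    def restore(self, name):
        """Bring one item from the snapshot in view into a new present.

        Raises KeyError if the item does not exist in the viewed snapshot.
        """
        item = self.current()[name]
        latest = dict(self.snapshots[-1])
        latest[name] = item
        self.record(latest)
        return item


folder = Timeline()
folder.record({"notes.txt": "first draft"})
folder.record({"notes.txt": "second draft", "todo.txt": "buy milk"})
folder.record({"todo.txt": "buy milk"})   # notes.txt got deleted
folder.back()
folder.back()                              # two steps into the past
folder.restore("notes.txt")                # and the first draft is back
```

There are only two directions to go, which is exactly why it feels like traveling: the same `back` and `forward` work whether the snapshots hold files, contacts, or photos.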

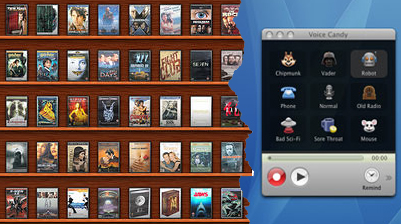

A clichéd but good example is Delicious Library: humans love to collect things and arrange them to make them pretty. Once again, you’re creating pretty things with little effort while a very consistent model helps represent the objects in your mind on the screen. One could argue it’s almost a game to make it look just how you want it. Most people I saw using it felt it had a certain ‘kick’ to it. Less ‘famous’ third-party developers manage to get this right as well; Voice Candy, by the Potionfactory, is a fantastic example of a very visual, straightforward interface that looks like a toy (a very clean one, at that) and works as effortlessly as one (I have come to prefer it over any other app on OS X for audio recording for that reason alone).

Tomorrow’s interfaces, in my imagination, carry a lot more influence from game design and take creative interaction to a new level. We’ve seen it in successful OS X apps, and there is no doubt that we’ll see this trend continue. Don’t settle for checkboxes and standard widgets in your application if you feel there’s really a layer of involvement missing; start brainstorming how you can bring the abstract to life by relating to the world your user lives in, and not the space in which the program resides.
